What could possibly go wrong?

By Aaron Kesel. Published 3-10-2020 by The Mind Unleashed

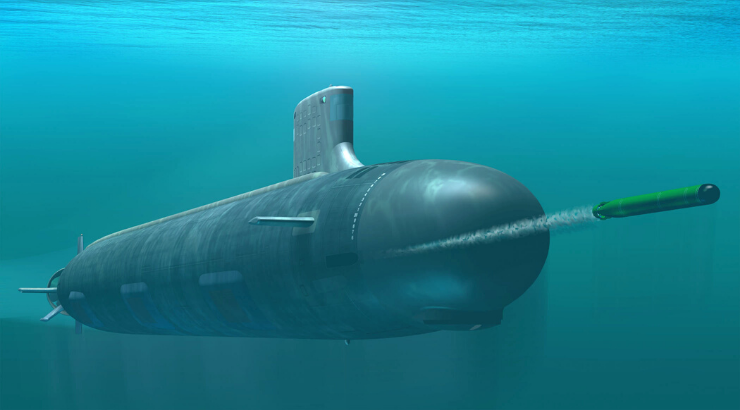

Photo: Wikimedia Commons

The U.S. Navy is secretly developing armed robot submarines controlled by onboard artificial intelligence (AI), which could potentially kill without explicit human control.

The Office of Naval Research is involved with the development of an AI system called CLAWS, which the agency describes in budget documents as an autonomous undersea weapon system for clandestine use. CLAWS will “increase mission areas into kinetic effects,” they write.

According to a report by New Scientist, details of the killer submersible were made available as part of the 2020 budget documents. However, not much more is known.

Very few details were publicly provided about the “top secret” project beyond the fact that it will use sensors and algorithms to carry out complex missions on its own.

CLAWS is expected to be installed on the new Orca-class robot submarines, which have 12 torpedo tubes and are being developed for the Navy by Boeing. That remains speculation, although according to New Scientist the Navy has hinted that this will be the case. What is known is that the system will be able to think and kill on its own.

“The Orca will have well-defined interfaces for the potential of implementing cost-effective upgrades in future increments to leverage advances in technology and respond to threat changes,” the Navy said.

“The Orca will have a modular payload bay, with defined interfaces to support current and future payloads for employment from the vehicle.”

Autonomous submarines already exist today and can complete tasks without human involvement. However, they lack intelligence and have limited functionality. Anything more complex than a mere location task requires a human operator to work via a remote communications link.

The new submarines will have a much greater level of artificial intelligence and be able to perform a wider range of functions without a human controller.

CLAWS was first revealed in 2018 as part of a U.S. Navy bid to “improve the autonomy and survivability of large and extra-large unmanned underwater vehicles,” according to New Scientist.

When it was first revealed, there was no mention of weapons being on the autonomous submarine, only a need for it to have sensors and to be able to make decisions.

Stuart Russell of the University of California, Berkeley told New Scientist that it was a “dangerous development” and a dangerous use of AI. “Equipping a fully autonomous vehicle with lethal weapons is a significant step, and one that risks an accidental escalation in a way that does not apply to sea mines.”

In “Mind the Gap: The Lack of Accountability for Killer Robots,” Human Rights Watch (HRW) poses an important question: what happens when a robot accidentally commits a war crime? The answer thus far is nothing, as there is no accountability.

“No accountability means no deterrence of future crimes, no retribution for victims, no social condemnation of the responsible party,” said Bonnie Docherty, senior Arms Division researcher at HRW and the report’s lead author at the time.

As TMU reported, people like Brandon Bryant, who controlled drones for the U.S. military, have a conscience, while robots will kill without remorse. Since 2013, there has been a campaign to Stop Killer Robots, encouraging countries to preemptively ban fully autonomous weapons.

This article is published under a Creative Commons Attribution 4.0 International license